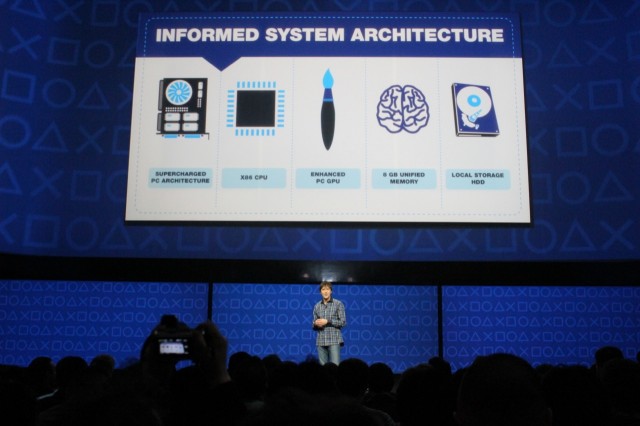

Mark Cerny gives us our first look at the PS4's internals.

By the time Sony unveiled the PlayStation 4 at last night's press conference, the rumor mill had already told us most of what would be inside the (as-yet-nonexistent) box: an x86 processor and GPU from AMD and lots of memory.

Sony didn't reveal all of the specifics about its new console last night (and, indeed, the console itself was a notable no-show), but it did give us enough information to be able to draw some conclusions about just what the hardware can do. Let's talk about what components Sony is using, why it's using them, and what kind of performance we can expect from Sony's latest console when it ships this holiday season.

The CPU

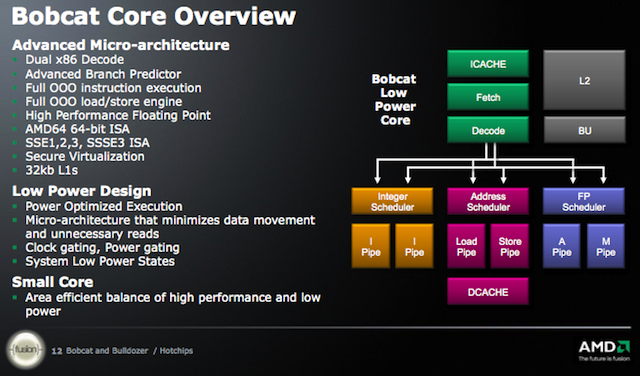

AMD's Jaguar architecture, used for the PS4's eight CPU cores, is a follow-up to the company's Bobcat architecture for netbooks and low-power devices.

We'll get started with the components of most interest to gamers: the chip that actually pushes all those polygons.

The PS4 eschews expensive custom chips like the Cell in favor of one of AMD's accelerated processing units (APUs). This APU shares surface-level similarities with the chips you can pick up for your desktop from Newegg or Amazon, but the details are much different: it combines eight CPU cores based on AMD's Jaguar architecture and a GPU capable of 1.84 TFLOPS of raw performance on the same die.

The choice to go with an AMD CPU makes sense for a few reasons—the company's chips don't have the best x86 performance, but they're generally considered to be "good enough" for most tasks, and it's also likely that Sony could extract a better price out of the small and troubled AMD relative to the still-dominant Intel. The company's experience in high-performance graphics also can't be discounted; Intel's graphics products have improved at an impressive pace over the last few years, but they still don't have what it takes to power a high-end game console.

However, this does have implications for the console's CPU performance. AMD's CPU architectures have largely lagged behind Intel's in both instructions-per-clock and performance-per-watt since Intel released its Core 2 Duo CPUs way back in 2006, and though price cuts have helped it stay more-or-less competitive at the low and middle sections of the market, it has been effectively shut out of the high-end CPU market for years now.

The eight Jaguar CPU cores in the PS4 are going to be even slower than cores based on AMD's flagship Piledriver architecture. Jaguar is AMD's follow-up to Bobcat, the company's low-power CPU architecture intended for use in netbooks, tablets, and other small computing devices. This isn't automatically a bad thing: Bobcat is already much faster than Intel's analogous Atom processors and Jaguar will definitely widen that gap, and the Bobcat and Jaguar parts that make it into netbooks and tablets are more likely to be dual-core chips than the octo-core configuration in the PS4.

The upshot is that there's a fair amount of CPU performance here—nothing record-breaking, but more than enough to work with. But it does mean that developers who want to take full advantage of the PS4's CPU are going to have to optimize their games to be heavily multithreaded. Sony's development tools will probably help developers do this, and taking advantage of eight x86 cores is still likely to be less difficult than developing for the complicated Cell processor (note that one of Sony's refrains at last night's unveiling was all about ease-of-use for developers). Still, it's an interesting move, given that most games on x86 PCs still benefit more from a few very fast CPU cores rather than many slower cores.
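To make "heavily multithreaded" concrete, here is a minimal sketch of the general pattern: split one frame's worth of independent work into eight chunks and hand each chunk to a worker. Everything in it (`update_entity`, `update_world`, the 1D position/velocity model) is hypothetical and has nothing to do with Sony's actual tools. Python threads also won't run CPU-bound work in parallel because of the interpreter lock, so this only illustrates the partitioning, not a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

NUM_CORES = 8  # the PS4's CPU core count, per the article


def update_entity(position, velocity, dt):
    """Hypothetical per-entity work: advance a 1D position by one time step."""
    return position + velocity * dt


def update_chunk(chunk, dt):
    """Update one worker's share of the entities."""
    return [update_entity(p, v, dt) for p, v in chunk]


def split_evenly(items, parts):
    """Split items into `parts` contiguous chunks whose sizes differ by at most one."""
    base, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + base + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def update_world(entities, dt, workers=NUM_CORES):
    """Fan the entity list out to `workers` tasks and reassemble results in order."""
    chunks = split_evenly(entities, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(update_chunk, chunks, [dt] * len(chunks))
    return [value for chunk in results for value in chunk]
```

The pattern only pays off when the chunks really are independent. Game logic with lots of cross-entity dependencies is much harder to carve up this way, which is why a few fast cores are often easier to use than many slow ones.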

The decision to go with Jaguar cores over something based on Piledriver (or even Steamroller, Piledriver's follow-up) is almost certainly about keeping overall power consumption as low as possible, especially when the console is being used for non-gaming activities, as is becoming more and more common as game consoles morph from dedicated gaming machines to do-everything set-top boxes. Both Bobcat and Jaguar support fairly aggressive power gating, which can turn entire CPU cores off when they're not being used—if you're doing a task that's more demanding of the GPU or uses the PS4's "secondary custom chip" (more on that in a bit), the console can simply switch off all of the unused CPU cores, saving power and cutting down on the amount of heat being generated.

Finally, the x86 CPU has implications for backward compatibility: the PS4's internals are so far removed from the PS3's that software emulation isn't going to be possible, and including the PS3's internals in the PS4, the way early PS3s included the PS2's chips to enable backward compatibility, would add too much cost for too little benefit. Sony has suggested that it might offer to stream some PS3 titles à la OnLive or Nvidia's Grid server, and while cloud gaming has its own downsides (latency being the most notable), it's going to be the only way that this new PlayStation can play games made for its predecessor.

The GPU

The PS4's GPU is much quicker than the PS3's, but high-end desktop GPUs will already outperform it.

We don't know the exact architecture of this GPU, but there are only a couple of likely candidates—either AMD's currently shipping Radeon 7000-series architecture (codenamed "Southern Islands"), or its next-generation Radeon 8000-series architecture (codenamed "Sea Islands"). It's difficult to tell which is more likely; the spec sheet that is circulating merely says that these chips are "next-generation," but out of context it's not immediately clear what generation it's "next" from. In either case, the 8000-series isn't a radical departure from the 7000-series, and their underlying architectures and supported APIs are quite similar.

Whichever it is, we know a few things, and can infer some others. Sony is claiming that the GPU has 18 "compute units." Let's assume these are the same "compute units" as the ones used in the Radeon 7000 series, and that each unit is composed of 64 of AMD's stream processors, four texture units, and one render output unit (ROP). In a GPU with 18 compute units, you'd end up with 1152 stream processors, 72 texture units, and 18 ROPs. This configuration doesn't match any one of AMD's current desktop or laptop GPUs exactly, but given the cited number of FLOPS it should perform just a bit better than AMD's Radeon HD 7850.
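The arithmetic behind those totals is easy to check, and the same numbers let us back out a rough clock speed from Sony's 1.84 TFLOPS figure. The sketch below uses the per-unit counts assumed above, plus one further assumption that is not in Sony's spec sheet: that each stream processor retires one fused multiply-add, counted as two floating-point operations, per clock. The function names are ours.

```python
# Per-compute-unit counts assumed in the text (Radeon 7000-series style).
STREAM_PROCESSORS_PER_CU = 64
TEXTURE_UNITS_PER_CU = 4
ROPS_PER_CU = 1

# Assumption (not from Sony): one fused multiply-add = 2 FLOPs per clock.
FLOPS_PER_SP_PER_CLOCK = 2


def gpu_totals(compute_units):
    """Scale the per-unit counts up to a whole GPU."""
    return {
        "stream_processors": compute_units * STREAM_PROCESSORS_PER_CU,
        "texture_units": compute_units * TEXTURE_UNITS_PER_CU,
        "rops": compute_units * ROPS_PER_CU,
    }


def implied_clock_mhz(tflops, stream_processors):
    """Clock speed that would produce `tflops` under the FLOPs-per-clock assumption."""
    flops = tflops * 1e12
    return flops / (stream_processors * FLOPS_PER_SP_PER_CLOCK) / 1e6


totals = gpu_totals(18)
clock = implied_clock_mhz(1.84, totals["stream_processors"])
print(totals)
print(f"implied GPU clock: about {clock:.0f} MHz")
```

Under those assumptions the quoted figure works out to a GPU clock of roughly 800 MHz. That is an inference from the published numbers, not something Sony has announced.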

The last generation of gaming consoles blew the doors off of the PC parts available at the time in terms of graphics performance, but the PS4 isn't doing that. The 7850 is a solid performer, but even compared to today's GPUs its performance is probably best described as "upper midrange." That card does get you good performance for the price, though: Newegg currently lists 7850 cards starting at around $170. This compares favorably to the $400-or-so you'll pay for a higher-end Radeon HD 7970, for example, which is generally about a third faster than the 7850. Getting roughly three-quarters of the performance for less than half the price is a solid value proposition, and should help to keep the console's price down (though we won't know that until Sony has a price to give us).
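For anyone who wants to check the value math, here is a quick sketch using the prices quoted above. It takes "about a third faster" literally, which is a simplification, since real-world gaps vary from game to game.

```python
PRICE_7850 = 170.0     # approximate Newegg starting price cited above
PRICE_7970 = 400.0     # approximate price cited above
SPEEDUP_7970 = 1 / 3   # "about a third faster", taken literally


def relative_performance(speedup):
    """Slower card's performance as a fraction of the faster card's."""
    return 1.0 / (1.0 + speedup)


def relative_price(cheaper, pricier):
    """Cheaper card's price as a fraction of the pricier card's."""
    return cheaper / pricier


perf = relative_performance(SPEEDUP_7970)
price = relative_price(PRICE_7850, PRICE_7970)
print(f"performance: {perf:.0%} of a 7970")
print(f"price: {price:.1%} of a 7970")
print(f"performance per dollar: {perf / price:.2f}x the 7970's")
```

If the 7970 is a third faster, the 7850 delivers 75 percent of its performance for 42.5 percent of its price, or roughly 1.76 times the performance per dollar.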

What does all this mean? Well, the PS4's SoC should be capable of some really impressive visuals at 1080p, even on 3D TVs, with more impressive results than the Radeon HD 7850 itself, since game developers working with consoles can always optimize their engines and games to squeeze more performance out of console hardware (a nice side effect of having a single, stable platform to target). GPUs have also become quite a bit better at certain tasks than they were in 2005 and 2006—modern GPUs have made great strides in lighting and particle effects, which should really help to combat the brown-and-grey color palettes of so many of this generation's games (see this excellent Gamasutra article to see the technical reasons why this has been such an issue). Newer GPUs can also take over some of the heavy lifting from the CPU for tasks like physics processing—remember, GPU-assisted computing technology like CUDA and OpenCL didn't even exist the last time we got new game consoles.

However, if you were expecting the PS4 to support 4K gaming, you were probably destined to be disappointed this time around—the very highest of the high-end graphics cards available today can deliver playable performance for today's PC games at 4K, but the days when this highest-of-the-high-end performance would be crammed into a console are over. Sony has said that the PS4 will support 4K output for video and photos, but if you get a 4K TV at some point during the PS4's lifespan it looks like you'll have to live with upscaled games, barring any sort of software update or developer trickery that enables it.
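A quick pixel count shows why 4K is such a big ask. The sketch assumes "4K" means the 3840×2160 consumer UHD resolution, which Sony hasn't specified, and it ignores everything else that affects rendering cost.

```python
# Assumption: "4K" here means consumer UHD, 3840x2160.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
}


def pixel_count(name):
    """Total pixels per frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(target, baseline):
    """How many times more pixels `target` has than `baseline`."""
    return pixel_count(target) / pixel_count(baseline)


print(pixel_count("1080p"))            # 2073600
print(pixel_count("4K UHD"))           # 8294400
print(pixel_ratio("4K UHD", "1080p"))  # 4.0
```

Four times the pixels per frame doesn't translate into exactly four times the GPU work, but it gives a sense of the gap between a midrange part tuned for 1080p and native 4K rendering.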

The RAM

There are two things you need to know about the PS4's memory: there's a ton of it, and it's fast. The PS4 comes with 8GB of GDDR5 RAM—this fast memory, which far outperforms the DDR3 in most of today's desktops and laptops, is generally only used in graphics cards, and the most common configurations still only use 1GB or 2GB (though higher capacities are available).

The PS3 had only 512MB of RAM, and that was split up into two 256MB chunks for the system memory and the graphics memory, respectively. In the PS4, the system and graphics RAM is unified—assuming that there's no artificial cap imposed on how much memory the CPU or GPU can access (and we have seen some rumors based on dev kits that the GPU can only access about 2GB of it, though that is unconfirmed at this point), this means that they can both grab as much memory as they want to be used in whatever way they need it.

Having a gob of fast memory is going to drive the console's price up a bit, but it should keep things moving briskly. On the gaming side, more memory will allow for the use of larger and more detailed textures, support for higher resolutions (hinting that a 4K future for some games isn't completely out of the question, though for my money it still seems unlikely), and more anti-aliasing. On the system side, it could help to reduce or eliminate mid-game load times: the console is going to be able to load much more into RAM at once, reducing the amount of time it has to spend hitting the hard drive or the optical disc to grab new data. It's a nice bit of future-proofing on Sony's part. Even if developers don't use the full wealth of RAM right away after years of working with 512MB, they'll adjust quickly.

The "secondary custom chip"

We don't know that the PS4's "secondary custom chip" is ARM-based, but it's the only thing that makes sense.

We don't know much about the "secondary custom chip" in the PS4, but we do know what kinds of things the PS4 can do in the background without hitting the primary CPU and GPU: downloading updates and games, and encoding and decoding video (as seen in the video sharing demo). Sony obviously wants this stuff to be quick, seamless, and invisible to the user, so it makes sense to offload it to another chip so that it doesn't slow down the console's rendering speed.

Sony has spent little time on the specifics of the extra chip (or chips) that accomplish these tasks, but it seems to us that the best bet is something ARM-based. It would be both easy and cheap to build an ARM processor with an integrated video encode and decode block that could handle these tasks using just a fraction of the power of the main hardware, and there are plenty of smaller companies building chips that fit this description for smart TVs and other embedded devices. There also exists the possibility that the video encoding and decoding could be integrated into the GPU without impacting game performance, though we see using an ARM chip for this as being more efficient from a power usage standpoint.

The storage

No real upgrades here; like the PS3, the PS4 will feature a Blu-ray optical drive for discs and a mechanical hard drive for storage. We know that the Blu-ray drive will run at speeds of 6X, up from 2X in the PS3, which should be a boon for gamers tired of waiting through lengthy game install processes, but we don't know anything about hard drive capacities yet.
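To put the 6X figure in perspective, here is a back-of-the-envelope sketch. It assumes the standard Blu-ray base rate of 36 Mbit/s at 1X, a 25GB single-layer disc, and a drive that sustains its rated speed across the whole disc. Real drives usually only hit their top speed on part of the disc, so treat these as best-case numbers.

```python
BD_1X_MBIT_PER_S = 36.0  # Blu-ray base data rate at 1X


def bd_throughput_mb_per_s(speed_multiplier):
    """Best-case read rate in megabytes per second at the given X rating."""
    return speed_multiplier * BD_1X_MBIT_PER_S / 8


def full_read_minutes(disc_gb, speed_multiplier):
    """Minutes to read an entire disc at the sustained best-case rate."""
    seconds = disc_gb * 1000 / bd_throughput_mb_per_s(speed_multiplier)
    return seconds / 60


for speed in (2, 6):
    rate = bd_throughput_mb_per_s(speed)
    minutes = full_read_minutes(25, speed)
    print(f"{speed}X: {rate:.1f} MB/s, about {minutes:.0f} minutes for a 25GB disc")
```

At best, then, a full single-layer disc drops from around three-quarters of an hour to around a quarter of an hour of read time.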

This combination is pretty much the only one that makes sense. For a game console, solid state storage would drive the cost up too far relative to the benefits it would bestow—it's much more important to have room for all of those game installs and downloadable titles than it is for that content to load extremely quickly. It's possible that, as in the PS3, Sony will allow users to swap out their own hard drives in the PS4—if you want lightning-fast storage, that will be the way to get it.

One final thing that's worth noting: if the chipset in the PS4 is related to the AMD chipsets for desktops and laptops (and the presence of USB 3.0 in the console suggests that this is the case), it's probable that it will support SATA III transfer speeds (6.0Gb/s), up from the SATA I speeds in the PS3 (1.5Gb/s). The mechanical hard drive would still be something of a bottleneck here, but it should nevertheless provide a modest increase in data transfer speeds.
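Those SATA figures are raw line rates. SATA uses 8b/10b encoding, so only eight of every ten bits on the wire are payload. This sketch converts the two line rates into usable interface bandwidth; it says nothing about how fast the hard drive behind the interface actually is.

```python
def sata_payload_mb_per_s(line_rate_gbit_per_s):
    """Usable bandwidth in MB/s after 8b/10b encoding overhead."""
    payload_bits_per_s = line_rate_gbit_per_s * 1e9 * 8 / 10
    return payload_bits_per_s / 8 / 1e6


for name, rate in (("SATA I", 1.5), ("SATA III", 6.0)):
    print(f"{name}: {sata_payload_mb_per_s(rate):.0f} MB/s")
```

That works out to 150 MB/s for SATA I and 600 MB/s for SATA III, so the interface ceiling quadruples even if the drive itself can't fill it.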

A balanced, well-considered console

The PS3 was, in many ways, the last and most ambitious example of the "old way" to design a gaming console: going with a custom-designed chip and hoping that developers, with enough time and effort, would be able to squeeze better and better visuals out of it over the console's lifespan. The "old way" also dictated putting the biggest, fastest, most expensive parts that would safely fit into the box, both to make the graphics as impressive as possible on launch day and to lengthen the console's lifespan.

The PS4 (and, according to rumor, the next Xbox) reflects the way computing has changed since the last time new consoles were designed and released. Rather than using expensive custom-designed chips like Cell, it's using gently tweaked versions of off-the-rack PC parts from AMD. Rather than going for top-end chips, the console uses modern midrange parts that are faster than the consoles of yesteryear, but don't approach the heights of a high-end PC.

This approach may disappoint those who value their polygon counts above all else, but to us it seems quite sensible: the PS4's hardware makes all the right compromises between price and performance, and the result will hopefully be something reliable with good power usage that doesn't cost 599 US dollars.
