Over the weekend, The New York Times reported that the Obama administration is preparing to launch biology's first big project of the post-genome era: mapping the activity and processes that power the human brain. The initial report suggested that the project would get roughly $3 billion over 10 years to fund projects that would provide an unprecedented understanding of how the brain operates.

But the report was remarkably short on the scientific details of what the studies would actually accomplish or where the money would actually go. To get a better sense, we talked with Brown University's John Donoghue, who is one of the academic researchers who has been helping to provide the rationale and direction for the project. Although he couldn't speak for the administration's plans, he did describe the outlines of what's being proposed and why, and he provided a glimpse into what he sees as the project's benefits.

What are we talking about doing?

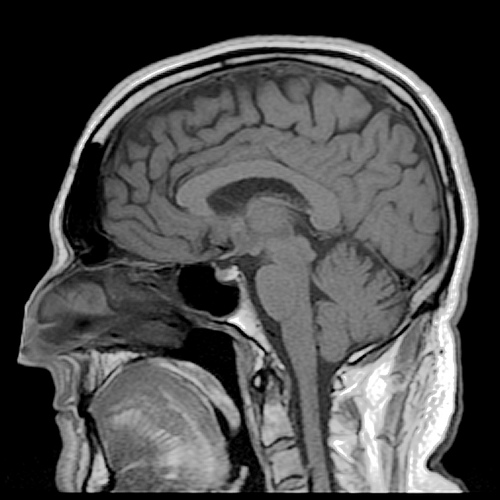

We've already made great progress in understanding the behavior of individual neurons, and scientists have done some excellent work in studying small populations of them. On the other end of the spectrum, decades of anatomical studies have provided us with a good picture of how different regions of the brain are connected. "There's a big gap in our knowledge because we don't know the intermediate scale," Donoghue told Ars. The goal, he said, "is not a wiring diagram—it's a functional map, an understanding."

This would involve a combination of things, including looking at how larger populations of neurons within a single structure coordinate their activity, as well as trying to get a better understanding of how different structures within the brain coordinate theirs. How many neurons will we need to study? Donoghue answered that question with one of his own: "At what point does the emergent property come out?" Things like memory and consciousness emerge from the actions of lots of neurons, and we need to capture enough of those to understand the processes that let them emerge. Right now, we don't really know what that level is. It's certainly "above 10," according to Donoghue. "I don't think we need to study every neuron," he said. Beyond that, part of the project will focus on what Donoghue called "the big question": what emerges in the brain at these various scales?

While he may have called emergence "the big question," it quickly became clear he had a number of big questions in mind. Neural activity clearly encodes information, and we can record it, but we don't always understand the code well enough to interpret our recordings. When I asked Donoghue about this, he said, "This is it! One of the big goals is cracking the code."

Donoghue was enthused about the idea that the different aspects of the project would feed into each other. "They go hand in hand," he said. "As we gain more functional information, it'll inform the connectional map and vice versa." In the same way, knowing more about neural coding will help us interpret the activity we see, while more detailed recordings of neural activity will make it easier to infer the code.

As we build on these feedback loops to understand more complex examples of the brain's emergent behaviors, the big picture should come into focus. Donoghue hoped that the work would ultimately provide "a way of understanding how you turn thought into action, how you perceive, the nature of the mind, cognition."

How will we actually do this?

Perception and the nature of the mind have puzzled scientists and philosophers for centuries, so why should we think we can tackle them now? Donoghue cited three fields that had given him and his collaborators cause for optimism: nanotechnology, synthetic biology, and optical tracers. Thanks to advances in nanotechnology, we can now produce much larger arrays of electrodes with fine control over their shape, allowing us to monitor much larger populations of neurons at the same time. On a larger scale, chemical tracers can now register the activity of large populations of neurons through flashes of fluorescence, giving us a way of monitoring huge populations of cells. And Donoghue suggested that it might be possible to use synthetic biology to translate neural activity into a permanent record of a cell's activity (perhaps stored in DNA itself) for later retrieval.

Right now, in Donoghue's view, the problem is that the people developing these technologies and the neuroscience community aren't talking enough. Biologists don't know enough about the tools already out there, and the materials scientists aren't getting feedback from them on ways to make their tools more useful.

Since the problem is understanding the activity of the brain at the level of large populations of neurons, the goal will be to develop the tools needed to do so and to make sure they are widely adopted by the bioscience community. Each of these approaches is limited in various ways, so it will be important to use all of them and to continue the technology development.

Assuming the information can be recorded, it will generate huge amounts of data, which will need to be shared in order to have the intended impact. And we'll need to be able to perform pattern recognition across these vast datasets in order to identify correlations in activity among different populations of neurons. So there will be a heavy computational component as well.
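As a rough illustration of the simplest version of this kind of analysis, here is a minimal Python sketch that flags pairs of neurons whose activity rises and falls together. It assumes each neuron's activity has already been reduced to a time series of equal length (for example, spike counts per time bin); the function names `pearson` and `correlated_pairs`, the 0.5 threshold, and the simulated data are all hypothetical, and nothing here describes the project's actual methods, which would need far more sophisticated statistics to scale to huge datasets.

```python
import math
import random
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length activity traces."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0  # a constant (silent) trace carries no correlation information
    return cov / (sx * sy)


def correlated_pairs(traces, threshold=0.5):
    """Return (i, j, r) for every pair of neurons whose |r| meets the threshold."""
    pairs = []
    for i, j in combinations(range(len(traces)), 2):
        r = pearson(traces[i], traces[j])
        if abs(r) >= threshold:
            pairs.append((i, j, r))
    return pairs


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical shared input driving two of the three simulated neurons.
    shared = [rng.gauss(0, 1) for _ in range(200)]
    traces = [
        [s + rng.gauss(0, 0.3) for s in shared],  # neuron 0 follows the shared input
        [s + rng.gauss(0, 0.3) for s in shared],  # neuron 1 follows it too
        [rng.gauss(0, 1) for _ in range(200)],    # neuron 2 fires independently
    ]
    for i, j, r in correlated_pairs(traces):
        print(f"neurons {i} and {j}: r = {r:.2f}")
```

Checking every pair grows quadratically with the number of neurons, which is one reason recordings of large populations quickly turn into a serious computational problem.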

Who’s going to pay for it, and where will the money go?

According to Donoghue, the basic outlines of the effort have been in the works for a while. In June of last year, a number of neuroscientists published a paper in Neuron that outlined the basic ideas. These scientists had been working with the Kavli Foundation, which maintains a strong interest in neuroscience. Kavli, in turn, has been in contact with other research organizations, including the Howard Hughes Medical Institute, the Wellcome Trust, and for this particular project, the Allen Institute for Brain Science and the Simons Foundation, among others.

Donoghue told Ars he only got involved after this point, as the effort began to focus more on the human brain and somewhat less on model organisms (though these will likely have to be used for some aspects of the studies). Given the large role that the government plays in funding biomedical research in the US, it was essential to get it involved. "We see this as a coordinating effort for funding that's already there to ensure maximal effective use of the funds," Donoghue said. Although he hopes there will be ways to obtain additional money for the project as a whole, the overall scope of the project is such that many of its goals can be accomplished through existing funding mechanisms.

One of the reasons is that Donoghue expects that most of the work can be done via the existing model of funding many individual investigators. Although the project is being compared to the human genome effort, that was mostly completed through a handful of sequencing centers with highly specialized and expensive equipment. By contrast, Donoghue expects that most of the brain work can be done with materials that will be within the reach of independent labs. There will be some need to coordinate data sharing and computational resources, but the actual work of studying the brain is likely to be distributed widely within the research community.

The key features will really be the coordination and focus of the effort, along with the interdisciplinary technology development described above.

Why would we want to do this?

Understanding consciousness, decision making, and memory doesn't do it for you? That's OK; Donoghue suggested that the work could have huge commercial payoffs in both health and computing.

For computing, we may begin to understand why the human brain vastly outperforms computers on a number of tasks like image recognition and language comprehension. As an example, he pointed out that humans can read the distorted text of CAPTCHAs without (usually) too much struggle, yet they still pose a barrier to computers. Understanding how the brain manages this and other feats could allow us to design computers or software that can perform similar tasks. "You'll get a lot more spam," Donoghue joked, "but you might get intelligent readers that recognize spam." He could clearly envision a lot of additional applications for this sort of computerized text comprehension.

On the medical side, Donoghue noted that consciousness emerges from the network of interactions that take place in the brain. Many neural disorders, such as the loss of memory in Alzheimer's, the erratic thought in schizophrenia, and the unregulated emotions of depression, are disruptions of this underlying network. Understanding how it operates is an essential step in figuring out how to intervene. Donoghue argued that if we could use that knowledge to, say, add 10 years of health to the typical Alzheimer's patient, then we'd save more than the entire cost of the program.

In his view, even if you're not a neurobiologist, you should be hoping this program gets off the ground.
