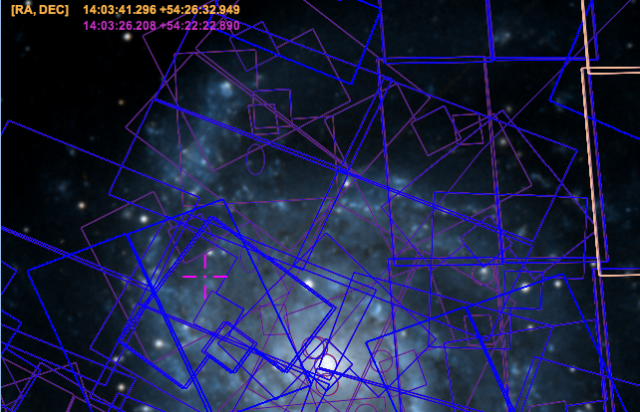

A view of overlays of the archived data for M101, the "Pinwheel Galaxy," in Space Telescope Science Institute's massive MAST archive.

On a twisting road that runs behind the main campus of Johns Hopkins University, there's a building that contains the entire known universe. Or at least pictures of it.

The building is the Space Telescope Science Institute (STSCI), home to the team that ran the Hubble Space Telescope for years. This space will house the operations for the James Webb Space Telescope when it launches in 2018. Currently, it also stores the Mikulski Archive for Space Telescopes (MAST). And MAST is more than just the Universe's photo album; it's becoming the archive of record for nearly all astronomy imagery and data.

About 30 miles southwest of STSCI's Baltimore locale, another federally funded research organization is dealing with another sort of big data. The Office of Information Technology at the National Institutes of Health (NIH) is working to implement changes to the Institutes' networks and infrastructure. The hope is to allow researchers inside and outside its Bethesda campus to make better use of its high-performance computing resources, massive databases of genomes, and other research data.

Both STSCI and NIH, despite vast differences in focus, are representative of the shifting demands of computational research. Today, organizations must move beyond the silos that have prevented collaborating researchers from getting to data, and knowledge, that could drive new science. Spaces like these have become hubs of collaboration for scientists in their respective fields, and they are facing ever-growing demands for both raw information and ways to turn that data into knowledge. The need is pressing: hosts must provide computing power on demand, both to perform large-scale analysis of existing data and to open up resources for research beyond institutions' walls.

This is driving an evolution in how these organizations build their networks and computing infrastructure, moving toward something that looks a lot like that of Google and other Web giants. It's also requiring a bigger investment in high-speed networks, both internally and externally.

The challenges of really big research data

There are some fundamental obstacles that STSCI's MAST and NIH's research networks both face. First is the scale of the data. At STSCI, the big data is petabytes of imagery and sensor data from NASA's space telescopes and other astronomy missions, as well as data from ground-based astronomy. At NIH, the "big data" that is most often in play is genomic data—a single individual's genome is three billion base pairs of data. Hundreds of thousands of genomes are being analyzed at a time by researchers hoping to find patterns in genes related to cancer or other illnesses.
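For a rough sense of the scale involved, the sketch below estimates how much storage the genomic figures above imply. The two-bits-per-base packing (enough to distinguish A, C, G, and T) and the cohort of 100,000 genomes are assumptions chosen for illustration, not numbers from NIH. Real sequencing output, with quality scores and redundant reads, is considerably larger.

```python
# Back-of-the-envelope size of packed genome data.
BASE_PAIRS_PER_GENOME = 3_000_000_000  # figure quoted in the article
BITS_PER_BASE = 2                      # assumption: A, C, G, T packed into 2 bits each


def genome_bytes(base_pairs: int = BASE_PAIRS_PER_GENOME,
                 bits_per_base: int = BITS_PER_BASE) -> int:
    """Bytes needed to store one genome at the given packing density."""
    return base_pairs * bits_per_base // 8


def cohort_terabytes(genome_count: int) -> float:
    """Decimal terabytes needed for `genome_count` packed genomes."""
    return genome_count * genome_bytes() / 1e12


if __name__ == "__main__":
    print(f"One genome: {genome_bytes() / 1e6:.0f} MB")
    # 100,000 is a hypothetical cohort size used only for illustration.
    print(f"100,000 genomes: {cohort_terabytes(100_000):.0f} TB")
```

Even under this minimal encoding, a study on that scale lands in the tens of terabytes before any analysis products are generated.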

With all that data comes a big, and growing, demand for access. MAST currently serves up, on average, between 14 and 18 terabytes worth of downloads per month to scientists through its various applications, about half of it from the Hubble Space Telescope. Much of this needs to be transformed from raw data into processed imagery before it's delivered, based on calibration data for the telescopes that collected it. Similarly, the demands at NIH aren't necessarily for raw genomic information, but for analysis data derived from it. This requires access to high-performance computing resources that researchers themselves may not have.
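To put the monthly download figures in network terms, here is a minimal sketch that converts a monthly volume into an average sustained data rate. It assumes a 30-day month and decimal terabytes; both are simplifications, and the function name is invented for this example.

```python
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumption: 30-day month


def average_mbps(terabytes_per_month: float) -> float:
    """Average sustained rate in megabits per second for a monthly volume (decimal TB)."""
    bits = terabytes_per_month * 1e12 * 8
    return bits / SECONDS_PER_MONTH / 1e6


if __name__ == "__main__":
    for tb in (14, 18):
        print(f"{tb} TB/month is about {average_mbps(tb):.1f} Mbit/s on average")
```

This is only an average. Actual traffic is bursty, so peak demand on the archive's links would be well above it.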

This is a challenge to the openness of research using these massive data stores. Most of the work served by both STSCI and NIH is done by networks of researchers who are just as likely to be across the street from the institutions as they are on the other side of the globe. They not only need access to data and computing power, but these individuals need ways to collaborate around projects. It has led the institutions toward providing an increasing number of collaborative tools on top of their mission-specific applications.

From “human jukebox” to petabytes on disk

STSCI has been serving up images from the Hubble Telescope since before there was a World Wide Web. I remember fetching some of the earliest public Hubble images, of the Shoemaker-Levy comet colliding with Jupiter, in 1994 using Gopher. But back then, aside from a small number of low-resolution images posted via Gopher and FTP, the majority of Hubble's data was stored to optical disk. "We started writing to 12-inch LMSI optical platters back in 1992," said Karen Levay, STSCI's Archive Sciences Branch chief. "Then we switched to (12-inch) 6.5 gigabyte optical platters by Sony—we had four jukeboxes of those online and another collection offline." For everything that didn't fit into the jukeboxes, accessing meant a technician fetching the right platter from the library. "We referred to it as the 'human jukebox,'" she said. It wasn't until 2003 that STSCI moved to keeping all its imagery on spinning disks, using optical disks only for backup.

Red Hat and Windows on Dell and other servers power most of STSCI's MAST archive.

Today, STSCI's archives have "crested the petabyte level in terms of capacity," said Gretchen Green, STSCI's chief engineer for Data Management Systems. That number, she told Ars, doesn't even include the Panoramic Survey Telescope and Rapid Response System (Pan-STARRS) data that STSCI is preparing to take on. This ground-based telescope imagery (being used in the search for asteroids and comets) will add another two to three petabytes of imagery data to the archive by itself. For even more storage, MAST has become the archive of record for many previous NASA astronomy missions, such as the Extreme Ultraviolet Explorer (EUVE) and the Swift gamma ray and X-ray space telescope. It's also helping with other space agencies' observation data, including the European Space Agency's XMM-Newton X-ray telescope.

While Levay says that the archive currently handles between 14 and 18 terabytes of downloads a month across all its sources, a significant portion of that has to be transformed before it can be delivered. (That doesn't include requests from scientists who want their data mailed to them on CD-ROM—still a frequently used option.)

The CD-ROM burner, still postal-netting astronomy data after all these years.

As the MAST archive has expanded, it has required other sorts of technology shifts beyond an improvement in storage. "We've had four different incarnations of hardware," said Ron Russell, STSCI's Principal Technologist. "We had VMS, then Ultrix, then Sun Solaris 7, 8, 9, and 10."

That last hardware generation was installed in 2003. A hardware refresh last year retired Solaris in favor of Red Hat Linux and Windows Server, and the group pitched Sybase in favor of Microsoft SQL Server, mostly for the economics of it, Russell said. There's also a smattering of MySQL and PostgreSQL databases for various projects at STSCI, and one application uses an Xgrid of Apple Xserves for image processing.

An Apple Xgrid serving up image processing cycles in one of STSCI's computer rooms.

Storage has been a major focus, for very simple reasons: the data sets aren't getting any smaller. While the Hubble data stored grows on average at about one terabyte per month, new projects are bringing in more data that will make Hubble's archives seem small in comparison.

Not all of that new data is imagery. The Galaxy Evolution Explorer, an all-sky ultraviolet survey satellite mission launched in 2003, will soon have its data added to the MAST archive. This includes a database from the spacecraft's photon detectors that records the time, vector, and energy level detail of every photon intercepted by the sensor. "They're now gathering the photon list—the project ends in April," said Green. "It's a 100-terabyte database."

And then there's the Webb Telescope, which will be managed by STSCI. It will have a mirror six times the size of Hubble's. The Webb will collect massive quantities of new data, and it will be able to detect galaxies so distant that they will be visible as they were when first formed in the early post-"big bang" universe. That research is going to mean potential petabytes more imagery for researchers to process and add to the archive.

You’re going to need a bigger boat

That massive trove of data is opening the door to new sorts of science—including "big data" analytics to find patterns in data that go beyond the scope of the projects MAST currently hosts. The number of researchers who will leverage big data "will be a small subset of our users," Green said, "but it's going to be significant." Right now, Green called MAST and STSCI's computing capabilities essentially a "large storage system."

"The scientists here are used to dealing with large storage systems. But like many data centers, we have a growing number of science users who want to do more than just desktop access of data."

That means moving more into the high-performance computing space—something that STSCI has primarily used for image processing thus far. "We do tera-scale computing today," Green said. STSCI can access processing power in the trillions of operations per second range for its applications. But that's not enough to handle true big-data analysis. "We want to have petascale processing capabilities within the next five years," she said. That means finding ways to significantly increase STSCI's computing capacity and improving its ability to collaborate with institutions that already have high-end computing abilities.

To handle part of that need, Green said, "We're evolving our central data store toward a hybrid cloud, which will provide our science users the ability to have more direct local access to data across missions." That "local access" would allow jobs running within STSCI's hybrid cloud (a mix of in-data center resources and computing resources provided by partner organizations) to hit data stores at high speed for data discovery and data mining, even some specialized "pipelining" of image data for specific applications.

STSCI is only a NASA contractor, so the institute doesn't have access to NASA's Nebula cloud computing systems. Instead, STSCI is working with partner academic institutions in the Virtual Astronomical Observatory consortium to build and design a hybrid cloud. In addition to sharing capacity and allowing high-speed data sharing with other data centers, "the cloud will also have connections to other cloud services to allow us to scale," Green said.

The VAO partnership and the larger International Virtual Observatory Alliance—a group of nine organizations in 19 countries collaborating on sharing astronomy data—have provided a number of tools to aid more data-driven research by astronomers. One of them is VAO's data discovery tool, which can search across data sets both in the archive and in other "virtual observatory" online collections, combining data across multiple missions and imagery catalogs.

The IVOA and VAO have also developed standards for data and collaboration used by STSCI, including VOSpace. That is "a sort of Dropbox-like solution" for teams to share data, according to Brian McLean, an observatory scientist at STSCI involved in MAST. VOSpace was originally developed with a SOAP-based interface as part of a joint effort by Johns Hopkins University, Caltech, and the now-terminated Astrogrid project. It is now a REST-based distributed cloud storage system that allows collaboration at a distance, maintained by the Canadian Astronomy Data Centre in partnership with other members of the IVOA. Green says that other collaboration tools are on STSCI's wish list.

Another problem being addressed as infrastructure becomes more distributed is the issue of user credentials. Russell said that STSCI is working on federating identity across its applications to make it easier for scientists to get access to their data.

To be able to fulfill STSCI's cloud vision, the IT team had to make some big upgrades, and more are on the horizon. "We've significantly upgraded our external bandwidth over the past two years," said Russell. "We have much higher bandwidth now." Besides the institute's Internet2 connection, "we went from a 100-kilobit line to a gigabit line" connecting to the commercial Internet, he said. "Our connection is upgradable, and we can expand our capacity. We're looking at burying additional cable, but that will take 12 to 18 months just to get permits."

The internal network in the data center has also been overhauled, he added. Both the storage area network's infrastructure and the "compute to storage" infrastructure have been upgraded to get more throughput for internal processing. On the network as a whole, the data center added more core switches.

STSCI is making the push now, because even though Webb is still years off, "Pan-STARRS is going to be triple the size of Webb," said Green. That data arrives within the next year. So by the time Webb launches, "we'll have the infrastructure in place and will have consolidated," she said.

Big data to big knowledge

NIH is facing its own challenges. It must get vast troves of biomedical data into the hands of researchers who can turn it into science. The organization is also dealing with the exploding demand for data science in medical research. The NIH's Data and Informatics Working Group (DIWG) made a set of recommendations in a final report last fall to address those issues.

In an interview with Ars, NIH Chief Information Officer Andrea Norris said that NIH's strategic plan for IT has two primary objectives. "One is called 'big data to big knowledge,'" she said, describing an externally focused plan to turn NIH's data resources into analytic products that can be used widely by the research community to advance science. The second is to make NIH's internal IT infrastructure more adaptable to allow for better sharing of and collaboration around its computing resources.

The "big data to knowledge" (BD2K) effort is being driven at NIH by Dr. Eric Green, the director of NIH's National Human Genome Research Institute. BD2K aims to facilitate much broader use of the biomedical "big data" created both within and outside NIH. To make that happen, NIH is developing analytic software, as well as working on improving training for researchers on the use of big data. "It's about getting tools in place to allow external partners to mine data from our large-scale databases," Norris said.

This effort also includes some institutional changes within NIH. In addition to his role at NHGRI, Green is now the acting associate director of NIH for Data Science (ADDS). So Green now works directly with NIH's director to drive the development of data science efforts within the Institute. He also chairs its Scientific Data Council and coordinates bio-big data projects inside and outside of NIH. In January, NIH launched a search for a permanent ADDS—the position is still open for applicants.

While BD2K itself is an institutional change for NIH, achieving its goals will require an investment in infrastructure. "What we need to do here at NIH and our extended campus is to create an adaptable environment in terms of core infrastructure and computing capability," Norris said.

To do that, Norris and NIH are moving forward on what she describes as a multiyear plan to improve NIH's shared high-performance computing capabilities while beefing up available storage and computational resources and NIH's internal network capacity. "We need to be able to support a mobile, collaborative workforce on campus," she said. It's all in addition to providing faster point-to-point network backbones to make sharing data and high-performance computing resources more practical.

Turning up the volume

NIH already has significant investments in high-performance computing systems and big data storage, but they're essentially islands within NIH's extended campus network. These offerings are underutilized because there's no way to share them effectively. "What we're looking at is how to leverage those investments more effectively, for compute as well as storage," Norris said. "We're making an assessment of what we have and also looking at more general computational needs and storage needs. We're working in the next year to meet the needs of rising computational demands."

Cloud computing is "a tool in our toolkit" to meet those needs, Norris said, but it's not the whole answer. Right now, cloud is primarily focused on external needs, for tasks like the 1000 Genomes Project, a collaboration with the European Bioinformatics Institute (Dr. Green is on the project's steering committee). The 1000 Genomes effort aims to sequence 2,500 genetic samples from a broad geographic and ethnic cross-section and catalog the variations across them.

But the cloud doesn't solve the fundamental issues of storage and processing for other research. Norris said that research organizations "need to control canonical data of their research." That means building out more internal storage infrastructure to keep that data secure—including research data that may not have been anonymized yet.

To make it easier for researchers to share all that computing and data, NIH is making investments in its internal network infrastructure. Norris said that in 2013, NIH was working to build faster point-to-point networks within its "greater campus" in the Bethesda area, increasing the capacity of the links interconnecting research centers "for targeted facilities." That effort will allow gene sequencing high-performance computing centers and other compute and storage resources to be "accessed more widely and effectively," she said. Throughout the next two to three years, she added, the institute would develop guidelines for deploying more high-speed interconnects.

There's more to NIH's network plans than just bigger cross-campus pipes. Norris said that her office is also working toward going "100 percent wireless campus wide. We have an increasingly mobile and collaborative workforce, and we want them to be able to access data wherever they are on campus."

We’re all in this together

One of the biggest lingering problems in fostering wider collaboration—not just between individual researchers, but between research organizations—is interoperability of data. Right now, there's a significant disconnect between how different research institutions format their data, making it difficult for them to marry data sets together or do collaborative data mining. NIH is looking at data standards from the broader medical data community for cues on how to make sharing information easier.

Perhaps there's a cue to be taken from the virtual observatory for health researchers. Yes, it's clearly simpler to come up with a common coordinate system for objects in the sky than it is to, say, standardize the schema for the human biological condition. But projects like 1000 Genomes show that it's possible to do open research in medicine collaboratively much as the virtual observatory community does science. Having a canonical archive like STSCI's MAST for medicine, however, could make health research that much more open. Best of all, it may speed up research in areas without the financial backing of deep-pocketed patrons.
